The security industry is racing to integrate AI into their penetration testing services. Before you accept an AI-powered assessment, there are questions your vendor probably isn’t answering, and may not have even asked themselves.

The promise of AI pentesting

Artificial intelligence has arrived in the penetration testing industry with considerable noise. Vendors are promising faster testing cycles, broader coverage, a greater number of findings, lower costs, and pentest reports that practically write themselves. For organisations that are under pressure to demonstrate security assurance without expanding their budgets, the sales pitch and promise of security is compelling.

Some of it is legitimate. AI tools can be genuinely useful for experienced testers by accelerating reconnaissance, processing large volumes of scan data and enriching the results, or even assisting with report drafting. Used as a method to supplement the skills and knowledge of security consultants, AI can easily improve the efficiency and output of an engagement without compromising its quality.

But a growing number of vendors are selling something different: AI as a replacement for manual testing, and not a supplement to it. Automated tooling that compromises systems, traverses networks, identifies vulnerabilities, and produces a report. All with minimal human involvement in the actual testing.

These tools range from guided step-by-step assistants that suggest techniques, to tools that invoke Kali Linux utilities automatically based on discovered findings. It’s faster, it’s cheaper, and it looks like a penetration test.

The question is whether it provides meaningful security assurance and whether it introduces risks that didn’t exist before.

What AI gets right, and what it misses

To be fair to the technology, which is incredible at this point in time, AI-assisted tooling provides genuine strengths in specific areas of penetration testing.

Reconnaissance and attack surface mapping both benefit from AI’s ability to process and semantically associate large datasets, much quicker than a human review. Vulnerability scanning and known CVE identification can be accelerated as part of this enrichment too. Report generation with appropriate remediation guidance can be a legitimate productivity application.

There are open-source tools that also benefit from the inclusion of AI tooling, which can automate the creation of vulnerability detection from a simple prompt and the context of the issue. This ultimately stems from LLM training on a massive amount of code-bases (but there are separate concerns with that). For standard, well-known types of vulnerabilities, AI tooling can perform reasonably well.

AI-assisted static analysis in code reviews is a good example of the technology being applied appropriately. The full context of the code is apparent and the AI can operate on known inputs, in a controlled environment, with the result (hopefully) being a human review of the output.

However, gaps can emerge in areas that can and should matter most to organisations:

Contextual business logic vulnerabilities are typically the most difficult category for traditional automated tooling to identify, but this can be less of an issue when an AI has a detailed model of how an application is intended to behave. It’s still not equivalent to the ability for a human tester to comprehend the intended functionality or raise questions mid-engagement.

Chained vulnerabilities are where experienced testers identify findings that justify the cost of a serious engagement. Three individually low to medium severity issues, combined in the right sequence, could result in a critical exploit path that gives an attacker complete access to a system. Identifying that chain requires lateral thinking, contextual judgment, and the kind of creative adversarial reasoning that current AI tools may not currently replicate reliably without the same level of information as a human-led tester.

Where context doesn’t help is novel attack techniques. However rich its environmental context, an AI tool cannot identify a vulnerability it hasn’t encountered in training. The most significant findings in any engagement are often the ones that require creative thinking about the specific environment. That remains human territory, but not because AI is incapable of creativity in principle, but because the unpredictability and novelty of real-world attack surfaces outpaces what any model that’s trained on historical data can reliably anticipate.

The honest picture is a bit more subtle than either AI advocates or sceptics may acknowledge. Context-informed AI tooling, when used by experienced testers that understand its limitations, can produce better and more consistent coverage than either approach alone in some scenarios.

The risk is not explicitly AI, it is AI presented as a turnkey replacement for human judgment that’s deployed without transparency, and then sold to buyers who don’t know the right questions to ask.

The question nobody is asking: Where does your data go?

This is the concern that pentest vendors and AI pentest software providers don’t seem to address, and presumably what most organisations may not even have visibility of.

When an AI pentest tool has successfully compromised a system during a penetration test, such as gaining access to a server, elevating privileges, and moving laterally through a network, it typically needs to process what it finds in order to determine the next steps. This could be the contents of a file, database records, user credentials (plaintext or otherwise), and Active Directory schemas.

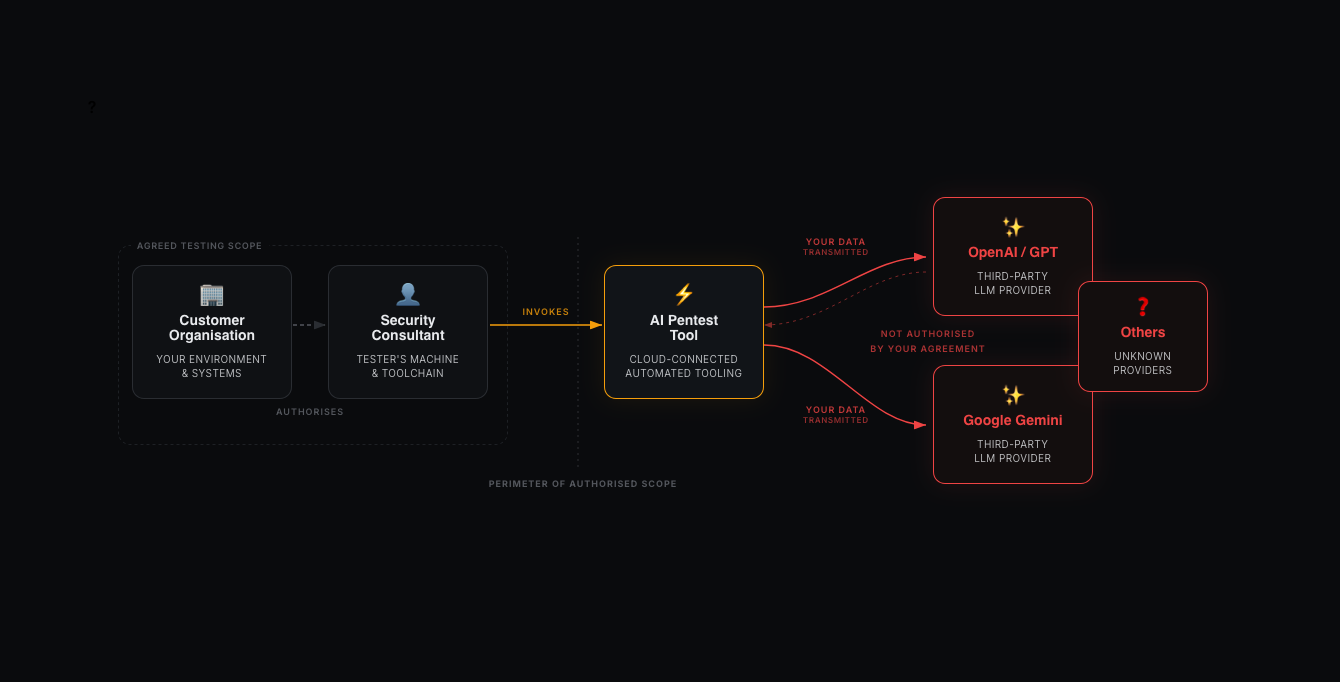

If the tool is based on cloud-provided LLMs (and I’d suspect most of them are), then that data is being sent to an external AI provider to be processed. This leaves your organisation environment and is processed by a third-party model: OpenAI, Anthropic Claude, Google Gemini, or whichever platform the pentest provider has chosen to build their tooling on.

Your penetration testing service agreement authorises the tester to access your systems. It likely does not authorise the contents of those systems to be transmitted to third-party AI providers.

For organisations in regulated industries, such as financial services, healthcare, legal, or public sector, the consequences of undisclosed data transmission through a security engagement could be severe. The security testing industry has been built over time on a layer of trust, with many certification bodies having strict requirements for data handling, processing, and storage of “client” data as part of the penetration testing methodology.

The question that every organisation should be asking of their penetration testing provider before signing a contract is simple: does your tooling make external API calls during testing, and if so, what data is transmitted and where? If the vendor cannot answer that question clearly, then that itself is a meaningful answer.

The compromised toolchain problem

During a penetration test, the security consultant’s system is often in a uniquely privileged position. The rules of engagement can often mean that there is a reduced monitoring setup in place (an exception being red-team engagements), and alerts that may normally be raised as a result of a potential attack are suppressed for the duration of testing and for the tester’s IPs/system.

In some cases, network access controls may also be relaxed to allow for mass vulnerability scanning or other types of allowed testing. This is often necessary for balancing the time that’s allocated to the engagement with realistic testing scenarios. It is also an element of trust in the ability of the tester and the pentest provider’s testing methodology.

If the AI pentest tool being run by the security consultant is compromised in any way, then the tester’s system becomes an attack vector in the organisation’s environment.

Supply chain attacks across software development have been rising over the last 6-12 months, with the most recent examples being through NPM, and similar concerns have been raised about AI-assisted developer tooling.

With many of the tools that testers and pentest companies are utilising, these are often open-source (or in the case of SaaS AI pentesting, developed in-house and likely using open-source libraries). A malicious update to one of these tools or library dependencies could introduce malicious capability and skills into the AI that the customer organisation has implicitly trusted as part of the pentest process.

Prompt injection is, of course, particularly relevant to AI tools that process external untrusted content as part of their pipeline. When an AI pentest tool reads file contents, parses web application or API responses, or processes the data discovered during testing, it is potentially executing instructions embedded in that content.

Customer organisations, or potential malicious parties who anticipated a future pentest, could embed prompt injection payloads in files or HTTP responses that are designed to manipulate LLM behaviour.

This isn’t a novel idea, as many businesses often run their own internal or external honeypots as a standard process, or even to catch-out pentesters during an assessment. Where this is new is that the impact could be significant with bypassing the AI tool’s guardrails (if there are any), and the pentester may have no visibility of what the AI has been instructed to do.

Destructive scenarios during penetration tests are the last thing that a pentest provider or security consultant would want to happen, and clearly the customer organisation would not want this either.

There are documented cases in adjacent contexts where AI embedded tooling, such as in a development pipeline, where full production environments have been deleted. Other examples include email mailboxes that have been purged, with no emergency stop appearing to halt the process.

All it would take for this to be a reality during a penetration test is an incorrect prompt being coded into the tool, or as mentioned a potential prompt injection attack (whether intentional or not). Imagine what could happen if an AI pentest tool had compromised a Domain Admin account, started deleting user accounts, pivoted to Microsoft SQL servers and then overwrote or deleted customer data?

What responsible AI assisted testing could look like

To be clear, this article is not an argument against AI in penetration testing. It is an advocate for transparency, disclosure, and human-led testing oversight. A responsible approach to AI tooling in a penetration testing engagement should, at the very least, include:

A clear disclosure to the customer organisation of what AI tools are being used, whether they make external API calls, and what data (category/type) may be processed externally. This should be part of the service agreement and not buried in a privacy policy.

Explicit consent for any use of external AI platforms for processing the customer organisation’s data. The cloud AI provider’s privacy policy and terms of service may not align with the customer’s requirements, so this should be considered too.

A human review of all AI generated actions before they are executed in the organisation’s environment. This is particularly vital when any action could have the potential for destructive or irreversible consequences.

Visibility of the AI tool’s reasoning or action plan. The security consultant should be able to see and validate what the tool is doing, and potentially halt operations when necessary.

Locally hosted LLMs are the ideal compromise where the customer has not explicitly consented to cloud-based AI models, but it is understandable that the processing power to operate local models and the breadth of training data that open-source models were built on doesn’t compare to the larger players in the AI field.

Questions to ask your pentest provider

If you are procuring penetration testing and are evaluating a pentest provider that offers AI assisted testing, or even if they don’t appear to offer this and potentially action this sort of tooling behind-the-scenes, consider asking the following questions:

- Do you utilise AI tooling as part of your penetration testing methodology?

- Does your tooling make any external API calls during testing? If so, to which providers?

- Is your AI tooling open-source, and if so how do you manage dependency and supply chain risk?

- What data may be transmitted externally during an engagement?

- Do you have a data processing agreement with your AI provider?

- How is human oversight maintained with AI generated actions during testing?

- What controls prevent prompt-injection attacks against your AI tooling during an engagement?

- What is your incident response process if your tooling behaves unexpectedly during an engagement?

If a vendor cannot answer these questions clearly, or there are any concerns with the answers themselves, you should treat their service offering carefully regardless of how compelling their marketing is.

Final thoughts on AI penetration testing

The penetration testing industry exists to provide organisations with an honest, independent assessment of their security posture. That assurance depends on the basis of trust; trust that the pentester will act on their testing delivery within the agreed boundaries, and that their actions will have minimal to no impact on the state of the organisation’s environments outside of what is agreed with the rules of engagement for exploiting vulnerabilities.

AI tooling has a legitimate place in security research, threat intelligence, and internal tooling. When used responsibly, it could benefit both the customer and the pentest provider through efficiency and the potential for discovering a greater number of weaknesses in that testing window. That place is not in an unsupervised, opaque, automated loop with access to a customer’s production environment.

Consultants continually train and educate themselves on both older technologies and any new, emerging software, systems, and protocols. During a manually-led penetration test there will be many instances where “automated” tooling is used, but this is invoked manually with specific input and full control over what is being run and when.

The irony of an AI powered penetration test that creates a security breach would be incredible. It would also be entirely avoidable.

Have questions about how Exploitr approaches tooling, methodology, and data handling during engagements? Get in touch – we’re happy to answer every one of those questions before you sign anything. We don’t use AI to deliver your pentest.